2026

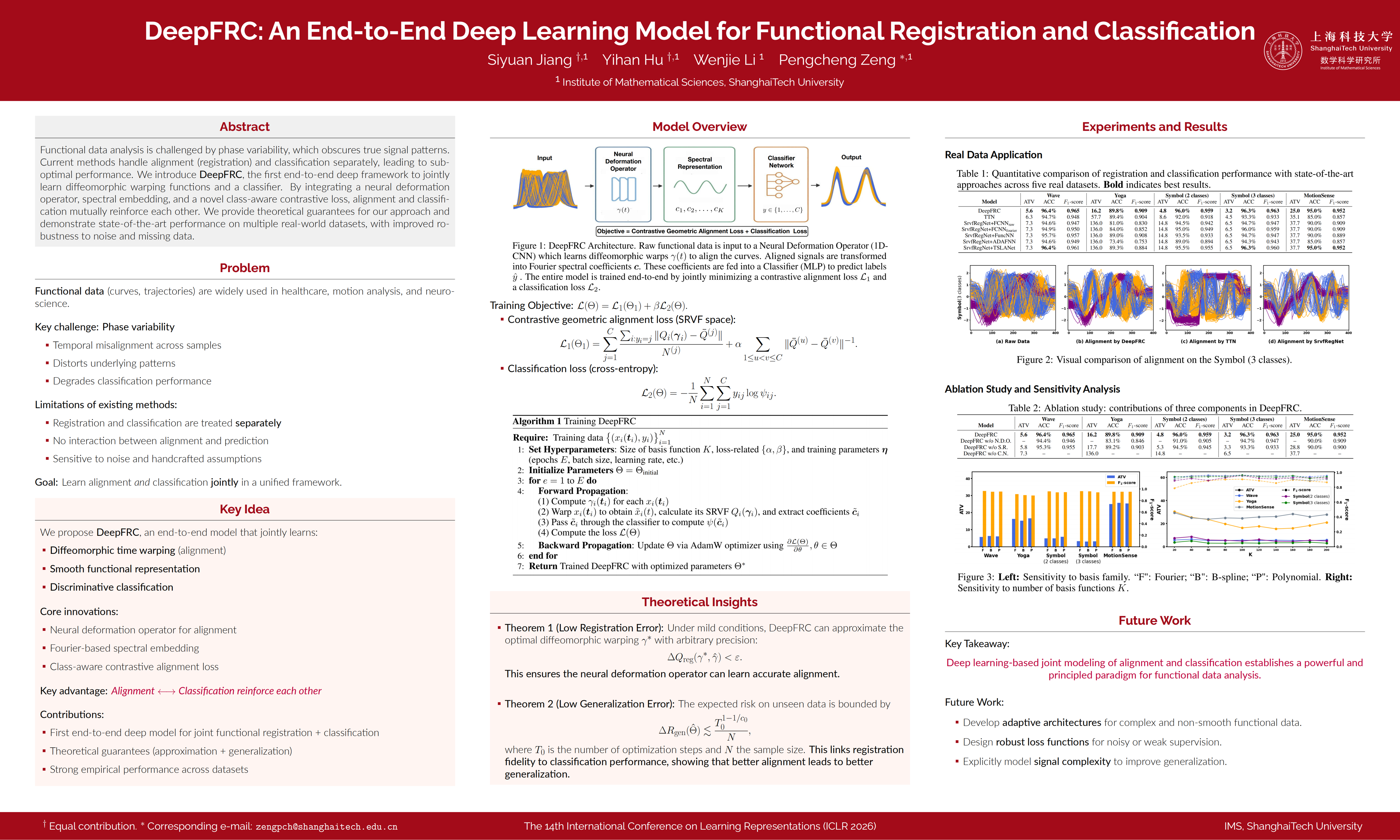

DeepFRC: An End-to-End Deep Learning Model for Functional Registration and Classification

Siyuan Jiang, Yihan Hu, Wenjie Li, Pengcheng Zeng

International Conference on Learning Representations (ICLR)

Abstract: Functional data, representing curves or trajectories, are ubiquitous in fields like biomedicine and motion analysis. A fundamental challenge is phase variability: temporal misalignments that obscure underlying patterns and degrade model performance. Current methods often address registration (alignment) and classification as separate, sequential tasks. This paper introduces DeepFRC, an end-to-end deep learning framework that jointly learns diffeomorphic warping functions and a classifier within a unified architecture. DeepFRC combines a neural deformation operator for elastic alignment, a spectral representation using a Fourier basis for smooth functional embedding, and a class-aware contrastive loss that promotes both intra-class coherence and inter-class separation. We provide the first theoretical guarantees for such a joint model, proving its ability to approximate optimal warpings and establishing a data-dependent generalization bound that formally links registration fidelity to classification performance. Extensive experiments on synthetic and real-world datasets demonstrate that DeepFRC consistently outperforms state-of-the-art methods in both alignment quality and classification accuracy, while ablation studies validate the synergy of its components. DeepFRC also shows notable robustness to noise, missing data, and varying dataset scales.
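As a rough illustration of what a warping function does in functional registration, the sketch below builds a strictly increasing warp on [0, 1] from unconstrained values and applies it to a sampled curve. This is a generic textbook-style construction (softmax increments plus a cumulative sum), not DeepFRC's neural deformation operator; the names `warp_from_logits`, `interp`, and `apply_warp` are hypothetical.

```python
import math
from typing import List, Sequence


def warp_from_logits(logits: Sequence[float]) -> List[float]:
    """Turn unconstrained values into an increasing warp gamma on a uniform grid.

    Softmax yields strictly positive increments that sum to 1, so their
    cumulative sum runs from gamma(0) = 0 to gamma(1) = 1 without decreasing.
    (Assumed construction for illustration only.)
    """
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]  # shift by max for stability
    total = sum(exps)
    gamma = [0.0]
    for e in exps:
        gamma.append(gamma[-1] + e / total)
    gamma[-1] = 1.0  # guard against rounding drift at the endpoint
    return gamma


def interp(grid: Sequence[float], values: Sequence[float], t: float) -> float:
    """Piecewise-linear interpolation of values sampled on an increasing grid."""
    if t <= grid[0]:
        return values[0]
    if t >= grid[-1]:
        return values[-1]
    for k in range(1, len(grid)):
        if t <= grid[k]:
            w = (t - grid[k - 1]) / (grid[k] - grid[k - 1])
            return (1.0 - w) * values[k - 1] + w * values[k]
    return values[-1]


def apply_warp(curve: Sequence[float], gamma: Sequence[float]) -> List[float]:
    """Evaluate the warped curve f(gamma(t)) on the curve's own uniform grid."""
    n = len(curve)
    grid = [i / (n - 1) for i in range(n)]
    return [interp(grid, curve, g) for g in gamma]


# Toy usage: a curve with 5 samples needs 4 increments.
curve = [0.0, 1.0, 4.0, 9.0, 16.0]
identity = warp_from_logits([0.0, 0.0, 0.0, 0.0])  # equal increments
warped = apply_warp(curve, warp_from_logits([2.0, -1.0, 0.5, 0.0]))
```

Equal logits give the identity warp, so `apply_warp(curve, identity)` returns the curve unchanged; unequal logits stretch some parts of the time axis and compress others while preserving the ordering of time points.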

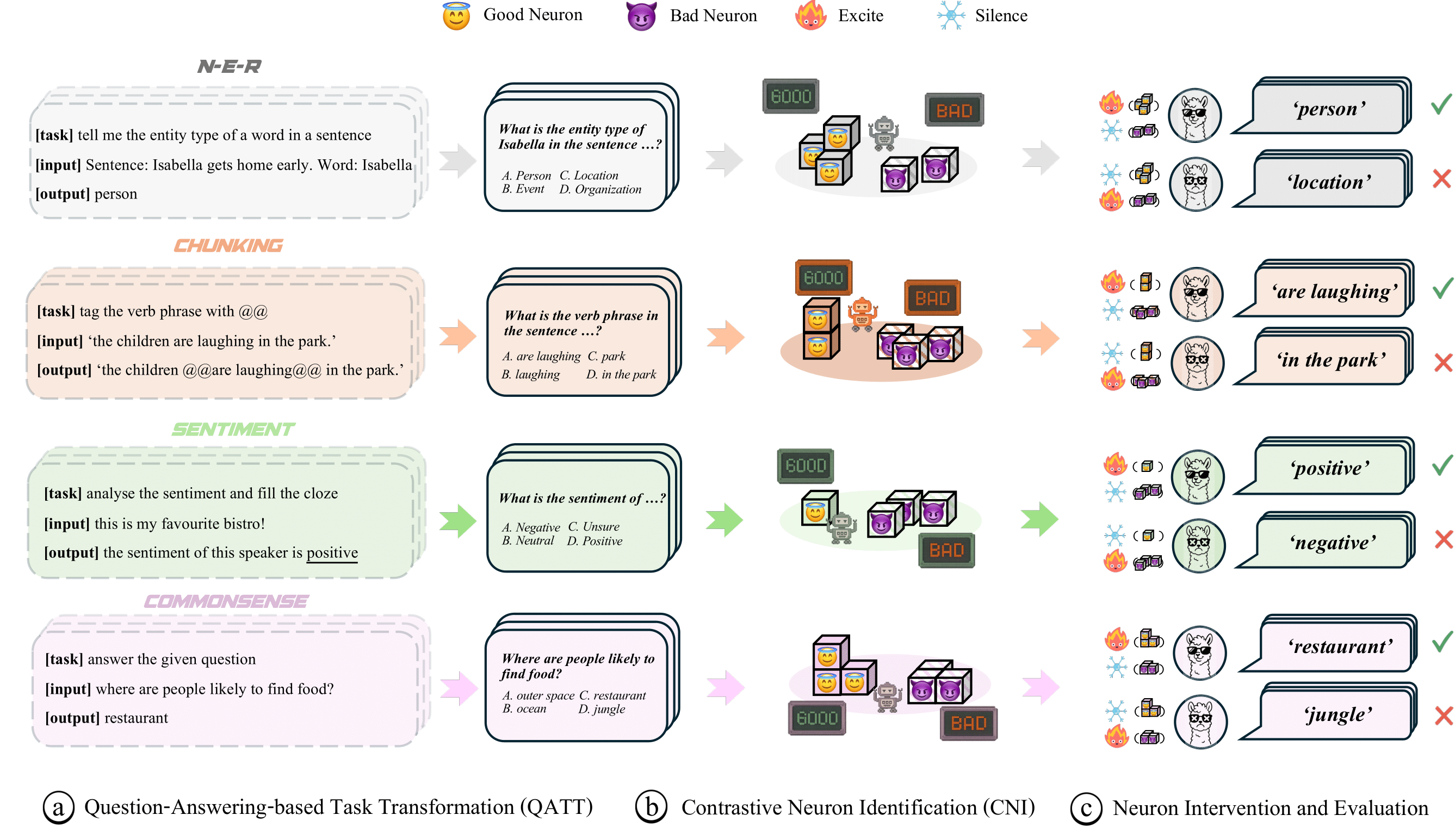

Identifying Good and Bad Neurons for Task-Level Controllable LLMs

Wenjie Li, Guansong Pang, Hezhe Qiao, Debin Gao, David Lo

In Submission

Abstract: Large Language Models (LLMs) have demonstrated remarkable capabilities on multiple-choice question answering benchmarks, but the complex mechanisms underlying their large-scale neurons remain opaque, posing significant challenges for understanding and steering LLMs. While recent studies have made progress on identifying the neurons responsible for certain abilities, these ability-specific methods are infeasible for task-focused scenarios that require the coordinated use of multiple abilities. Moreover, these approaches focus only on supportive neurons that correlate positively with task completion. They neglect neurons with other roles, such as inhibitive roles, and they overlook neuron attribution that is misled by fortuitous behaviors in LLMs (i.e., correctly answering questions by chance rather than through genuine understanding). To address these challenges, we propose NeuronLLM, a novel task-level LLM understanding framework that adopts the biological principle of functional antagonism for LLM neuron identification. The key insight is that task performance is jointly determined by neurons with two opposing roles: good neurons that facilitate task completion and bad neurons that inhibit it. NeuronLLM achieves a holistic modeling of neurons via contrastive learning of good and bad neurons, while leveraging augmented question sets to mitigate the fortuitous behaviors in LLMs. Comprehensive experiments on LLMs of different sizes and families show the superiority of NeuronLLM over existing methods in four NLP tasks, providing new insights into LLM functional organization.

2025

Delta-Influence: Identifying Poisons via Influence Functions

Wenjie Li, Jiawei Li, Pengcheng Zeng, Christian Schroeder de Witt, Ameya Prabhu, Amartya Sanyal

Transactions on Machine Learning Research (TMLR)

Abstract: Addressing data integrity challenges, such as unlearning the effects of data poisoning after model training, is necessary for the reliable deployment of machine learning models. State-of-the-art influence functions, such as EK-FAC, often fail to accurately attribute abnormal model behavior to the specific poisoned training data responsible for the data poisoning attack. In addition, traditional unlearning algorithms often struggle to effectively remove the influence of poisoned samples, particularly when only a few affected examples can be identified. To address these challenges, we introduce Δ-Influence, a novel approach that leverages influence functions to trace abnormal model behavior back to the responsible poisoned training data using as few as one poisoned test example. Δ-Influence applies data transformations that sever the link between poisoned training data and compromised test points without significantly affecting clean data. This allows Δ-Influence to detect large negative shifts in influence scores following data transformations, a phenomenon we term influence collapse, thereby accurately identifying poisoned training data. Unlearning this subset, e.g. through retraining, effectively eliminates the data poisoning. We validate our method across three vision-based poisoning attacks and three datasets, benchmarking against four detection algorithms and five unlearning strategies. We show that Δ-Influence consistently achieves the best unlearning across all settings, showing the promise of influence functions for corrective unlearning.
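The detection idea in this abstract (flagging training points whose influence on a poisoned test example drops sharply after the test example is transformed) can be sketched roughly as follows. The influence scores here are made-up toy numbers, and the fixed `drop_threshold` and the `min_votes` rule across transformations are assumptions for illustration, not necessarily the paper's exact decision rule; `flag_influence_collapse` is a hypothetical name.

```python
from typing import List, Sequence


def flag_influence_collapse(
    before: Sequence[float],
    after: Sequence[Sequence[float]],
    drop_threshold: float,
    min_votes: int,
) -> List[int]:
    """Return indices of training points showing a large negative influence shift.

    before[i]   : influence of training point i on the original poisoned test point
    after[t][i] : the same influence after applying transformation t to the test point
    A point is flagged when its score falls by at least drop_threshold
    under at least min_votes transformations (assumed rule).
    """
    flagged = []
    for i, base in enumerate(before):
        votes = sum(1 for scores in after if scores[i] - base <= -drop_threshold)
        if votes >= min_votes:
            flagged.append(i)
    return flagged


# Toy scores for four training points under three transformations.
before = [0.9, 0.1, 0.8, 0.05]
after = [
    [0.1, 0.12, 0.75, 0.04],
    [0.0, 0.09, 0.10, 0.06],
    [0.2, 0.10, 0.78, 0.05],
]
suspects = flag_influence_collapse(before, after, drop_threshold=0.5, min_votes=2)
# Only point 0 collapses under at least two transformations.
```

Requiring agreement across several transformations is one way to avoid flagging a clean point whose score happens to drop under a single transformation (point 2 in the toy data); the flagged subset would then be handed to an unlearning step such as retraining.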

2024

GameBench: Evaluating Strategic Reasoning Abilities of LLM Agents

Anthony Costarelli, Mat Allen, Roman Hauksson, Grace Sodunke, Suhas Hariharan, Carlson Cheng, Wenjie Li, Joshua Clymer, Arjun Yadav

LanGame @ NeurIPS 2024

Abstract: Large language models have demonstrated remarkable few-shot performance on many natural language understanding tasks. Despite several demonstrations of using large language models in complex, strategic scenarios, there is no comprehensive framework for evaluating agents' performance across the various types of reasoning found in games. To address this gap, we introduce GameBench, a cross-domain benchmark for evaluating strategic reasoning abilities of LLM agents. We focus on 9 different game environments, where each covers at least one axis of key reasoning skill identified in strategy games, and select games for which strategy explanations are unlikely to form a significant portion of models' pretraining corpora. Our evaluations use GPT-3 and GPT-4 in their base form along with two scaffolding frameworks designed to enhance strategic reasoning ability: Chain-of-Thought (CoT) prompting and Reasoning Via Planning (RAP). Our results show that none of the tested models match human performance, and at worst GPT-4 performs worse than random action. CoT and RAP both improve scores, but not to levels comparable to human performance.